Barbara Liskov—the brilliant Turing Award winner whose career inspired so much modern thinking around distributed computing—was fond of calling out the “power of abstraction” and its role in “finding the right interface for a system as well as finding an effective design for a system implementation.”

Liskov has been proven right many times over. We are now at a juncture where new abstractions, eBPF in particular, are driving the evolution of cloud native system design. They are unlocking the next wave of cloud native innovation and will set the course for cloud native computing.

Cloud native challenges: complexity and scale

Before we dive into eBPF, let’s first examine what cloud native is and why it needs to evolve.

Cloud native embraces a container model where a single kernel becomes the common denominator for managing many networking objects. Related trends follow: networks are becoming namespace-based, and full-blown VMs are being replaced by containers or lightweight VMs. Cloud native shifts the scale and scope from a few VMs to many containers, with higher per-node container density for efficient resource use and shorter container lifetimes. Because containers come and go so quickly, the dynamic IP pools that serve them also see high IP churn.

The challenges don’t end there.

Once you have stood up and bootstrapped your cluster, there are “Day 2” challenges like observability, security, multicluster and cloud management, and compliance. You don’t just move to a cloud native environment with the flick of a switch. It’s a progressive journey.

Once you have a cloud native environment set up, you will face integration requirements with external workloads (e.g., more predictable IP addresses via service abstractions or egress gateways, or BGP for pod networking CIDRs, services, and gateways). You will also have to deal with the gradual migration toward IPv6-only clusters for better IPAM flexibility, along with NAT46/64 for interaction with legacy workloads. And you will need to connect multiple clusters on-prem and off-prem in a scalable manner, with topology-aware routing, traffic encryption, and much more.

These problems are only going to grow larger, with Gartner estimating that by 2025 over 95% of new digital workloads will be deployed on cloud native platforms, up from 30% in 2021.

Limitations of the Linux kernel building blocks

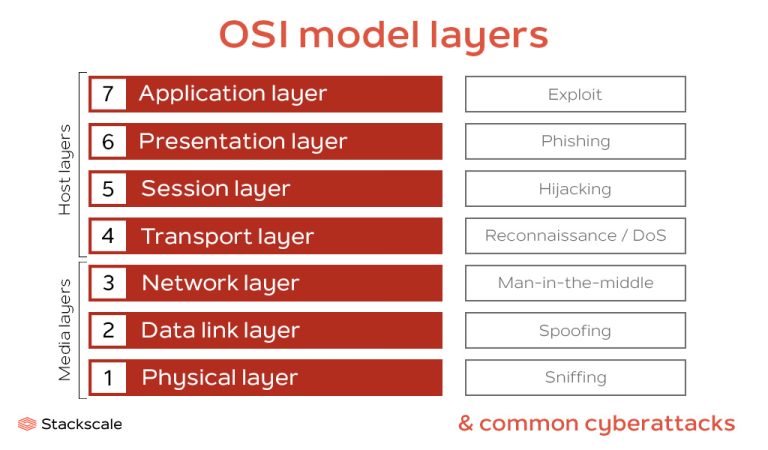

The Linux kernel, as usual, is the foundation for solving these challenges, with applications using sockets as data sources and sinks and the network as a communication bus. Linux and Kubernetes have come together as the “cloud OS.”

But cloud native needs newer abstractions than those currently available in the Linux kernel, because many of its building blocks, like cgroups (CPU, memory handling), namespaces (net, mount, pid), SELinux, seccomp, netfilter, netlink, AppArmor, auditd, and perf, were designed more than 10 years ago.

These tools don’t always talk to each other, and some are inflexible, allowing only global policies rather than per-container policies. They have no awareness of pods or any higher-level service abstractions, and many rely on iptables for networking.

As a platform team, if you want to provide developer tools for a cloud native environment, you can still be stuck in this box where cloud native environments cannot be expressed efficiently.

eBPF: Building abstractions for the cloud native world

eBPF is a revolutionary technology that allows us to dynamically program the kernel in a safe, performant, and scalable way. It is used to safely and efficiently extend the cloud native capabilities of the kernel without requiring changes to kernel source code or loading kernel modules.

eBPF:

- Hooks anywhere in the kernel to modify functionality and customize its behavior without changing the kernel’s source

- Verifies programs before they run so they execute safely, preventing kernel crashes and other instabilities

- Is JIT-compiled for near-native execution speed

- Allows addition of OS capabilities at runtime without workload disruption or node reboot

- Shifts the context from user space in Kubernetes into the Linux kernel

These capabilities allow us to safely abstract the Linux kernel and make it ready for the cloud native world.

eBPF abstractions for the cloud native revolution

Next let’s dive into 10 ways the eBPF abstraction is helping evolve the cloud native stack, from speeding up innovation to improving performance.

#1. eBPF speeds up kernel innovation

Adding a new feature or functionality to the Linux kernel is a long process. In the typical patch lifecycle, you need to develop a patch, get it merged upstream, then wait until the major distributions ship a release that includes it. Users typically stick to LTS kernels (for example, Ubuntu is typically on a two-year cadence). So innovation with the traditional model requires kernel modules or building your own kernels, leaving most of the community out. And the feedback loop from developers to users is minimal to nonexistent. eBPF breaks this long cycle by decoupling new functionality from kernel releases. For example, Cilium can be upgraded on the fly while the kernel keeps running, and it works across a large range of kernel releases. This allows us to add new cloud native functionality years before it would otherwise be possible.

#2. eBPF extends the kernel but with a safety belt on

New features can increase functionality, but they also bring new risks and edge cases. Development and testing cost much more for kernel code than for eBPF code that delivers the same functionality. The eBPF verifier ensures that the code won’t crash the kernel. Portability of eBPF programs across kernel versions is achieved with CO-RE (Compile Once, Run Everywhere), kconfigs, and BPF Type Format (BTF) information. The eBPF flavor of the C language is also a safer choice for kernel programming. All of these make it safer to add new functionality to the kernel with eBPF than by patching directly or using a kernel module.

#3. eBPF allows for short production feedback loops

Traditional feedback loops required patching the in-house kernel, gradually rolling out the kernel to the fleet to deploy the change, starting to experiment, collecting data, and bringing the feedback into the development cycle. It was a very long and fragile cycle where nodes needed to restart and drain their traffic, making it impossible to move quickly, especially in dynamic cloud native environments. eBPF decouples this feedback loop from the kernel and allows atomic program updates on the fly, dramatically shortening it.

#4. eBPF provides building blocks in the kernel instead of reinventing the userspace wheel

Instead of requiring rewrites of large parts of the user space stack, eBPF is able to piggyback on parts of the kernel and use them as-is while making integration dramatically easier. eBPF adds building blocks to the kernel that are too complex for other kernel subsystems, especially for new cloud native use cases. With eBPF, Cilium was able to easily add a NAT46/64 gateway to connect IPv6-only Kubernetes clusters to IPv4-based infrastructure.
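To make the NAT46/64 idea concrete, here is a minimal user-space sketch of the address mapping such a gateway relies on. It assumes the RFC 6052 well-known prefix `64:ff9b::/96`, which the article does not specify, and the function names are hypothetical. It illustrates the translation arithmetic only and is not Cilium's implementation.

```python
import ipaddress

# Assumption: the RFC 6052 well-known prefix for IPv4-embedded IPv6 addresses.
NAT64_PREFIX = ipaddress.IPv6Network("64:ff9b::/96")


def ipv4_to_nat64(ipv4: str) -> ipaddress.IPv6Address:
    """Embed an IPv4 address in the low 32 bits of the /96 prefix."""
    v4 = ipaddress.IPv4Address(ipv4)
    return ipaddress.IPv6Address(int(NAT64_PREFIX.network_address) | int(v4))


def nat64_to_ipv4(ipv6: str) -> ipaddress.IPv4Address:
    """Recover the embedded IPv4 address from a NAT64-mapped IPv6 address."""
    v6 = ipaddress.IPv6Address(ipv6)
    if v6 not in NAT64_PREFIX:
        raise ValueError(f"{v6} is not inside {NAT64_PREFIX}")
    return ipaddress.IPv4Address(int(v6) & 0xFFFFFFFF)
```

An IPv6-only pod would address a legacy IPv4 service through the mapped IPv6 address, and the gateway would rewrite it back to the IPv4 address on the way out.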

#5. eBPF allows you to fix or mitigate kernel bugs on the fly

Recently, eBPF was used to fix a kernel bug in the veth (virtual Ethernet) driver that was affecting queue selection. (See the eBPF Summit talk, All Your Queues Are Belong to Us.) This on-the-fly fix avoided a complex rollout of new kernels, an especially time-consuming process for cloud providers. Cloud native workloads can bring new edge cases to the kernel, but on-the-fly fixes with eBPF make packet processing more resilient and reduce the attack surface available to bad actors.

#6. eBPF moves data processing closer to the source, reducing resource consumption

Traditional virtualized networking functions, such as load balancers and firewalls, operate at the packet level. Every packet needs to be inspected, modified, or dropped, which is computationally expensive for the kernel. eBPF reframed the original problem by moving as close to the event source as possible, for example toward per-socket hooks, per-cgroup hooks, and XDP (eXpress Data Path). This resulted in significant resource cost savings and allowed the migration from dedicated boxes to generic worker nodes. Seznam.cz was able to reduce its load balancer CPU consumption by 72x using eBPF.
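The difference between per-packet and per-socket work can be sketched with a toy model. The service table, addresses, and function names below are hypothetical, and the code runs in user space purely to count how often a translation happens. It does not model real eBPF hooks.

```python
# Hypothetical service table: (virtual IP, port) -> (backend IP, port).
SERVICES = {("10.96.0.10", 80): ("10.0.1.5", 8080)}


def per_packet_path(dst, n_packets):
    """Translate the destination on every packet; return (backend, lookups)."""
    backend, lookups = dst, 0
    for _ in range(n_packets):
        backend = SERVICES.get(dst, dst)
        lookups += 1
    return backend, lookups


def per_socket_path(dst, n_packets):
    """Translate once at connect time; later packets already carry the backend."""
    backend = SERVICES.get(dst, dst)
    return backend, 1
```

For a connection that sends 1,000 packets, the first function performs 1,000 lookups and the second performs one, which is the intuition behind moving the work toward the socket.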

#7. eBPF enables lower traffic latency

By using eBPF for forwarding, we allow many parts of the networking stack to be bypassed, greatly improving networking efficiency and performance. For example, with eBPF, Cilium was able to implement a bandwidth manager that reduced p99 latency by 4.2x. It also helped enable BIG TCP and a new veth driver replacement that lets containers achieve host networking speeds.

#8. eBPF delivers efficient data processing

By keeping the fast path to a minimum, eBPF reduces the kernel feature creep that slows down data processing. Complex, custom cloud native use cases don’t need to become part of the kernel. They simply become more building blocks in eBPF that can be leveraged in different edge cases. For example, by decoupling helpers and maps from entry points in eBPF, Cilium was able to create a faster and more customizable kube-proxy replacement in eBPF that can continue to scale when iptables falls short.
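One way to see why a map-based design keeps scaling is to compare a sequential rule walk with a keyed lookup. The sketch below is a simplified user-space model, assuming rules are evaluated in order and that a Python dict stands in for a hash map. The helper names and addresses are hypothetical, and this is neither how iptables nor Cilium is actually implemented.

```python
def build_rules(n):
    """Create n hypothetical (service key, backend) rules."""
    return [
        ((f"10.96.{i // 256}.{i % 256}", 80), (f"10.0.{i // 256}.{i % 256}", 8080))
        for i in range(n)
    ]


def linear_lookup(rules, key):
    """Walk the rules in order; return (backend, rules checked)."""
    for checked, (match, backend) in enumerate(rules, start=1):
        if match == key:
            return backend, checked
    return None, len(rules)


def map_lookup(table, key):
    """Single keyed lookup, independent of how many services exist."""
    return table.get(key)
```

With 1,000 services, finding the last rule takes 1,000 comparisons in the linear walk, while the keyed lookup costs roughly the same no matter how large the table grows.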

#9. eBPF facilitates low-overhead deep visibility into the system

Given the churn in cloud native workloads, it can be difficult to find and debug issues. eBPF collectors make it possible to build low-overhead, fleet-wide tracing and observability platforms. Instead of having to modify application code or add sidecars, eBPF allows zero-instrumentation observability. Troubleshooting production issues on the fly can also be done safely via bpftrace, which offers significantly richer visibility, programmability, and ease of use than old-style perf.

#10. eBPF creates secure identity abstractions for policy enforcement

In cloud native environments, eBPF allows you to abstract away from high pod IP churn towards more long-lasting identities. IPs are meaningless given that everything is centered around pod labels and that the pod lifetime is generally very short with ephemeral workloads. By understanding the context of the process in the kernel, eBPF helps abstract from the IP to provide more concrete identity abstractions. With a secure identity abstraction for workloads, Cilium was able to build features like egress gateways for short-lived pods and mTLS.
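The shift from IP-based to identity-based policy can be sketched as follows. This is a user-space illustration under stated assumptions: identities are derived from a pod's label set, policy is keyed on identity pairs, and all names, labels, and numbers are hypothetical rather than taken from Cilium.

```python
from itertools import count


class IdentityAllocator:
    """Assign one stable numeric identity per distinct set of labels."""

    def __init__(self):
        self._ids = {}
        self._next = count(start=1000)

    def identity_for(self, labels):
        key = frozenset(labels.items())
        if key not in self._ids:
            self._ids[key] = next(self._next)
        return self._ids[key]


allocator = IdentityAllocator()
ip_to_identity = {}   # changes constantly as pods come and go
allowed_pairs = set() # (source identity, destination identity), stable


def pod_started(ip, labels):
    ip_to_identity[ip] = allocator.identity_for(labels)


def pod_stopped(ip):
    ip_to_identity.pop(ip, None)


def is_allowed(src_ip, dst_ip):
    """Resolve IPs to identities, then check policy on identities only."""
    pair = (ip_to_identity.get(src_ip), ip_to_identity.get(dst_ip))
    return pair in allowed_pairs
```

When a pod is replaced and comes back with a new IP but the same labels, it maps to the same identity, so the policy entry does not need to change.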

eBPF for innovation, abstraction, and performance

Cloud native is shifting the requirements for platforms that need to support higher levels of performance and scalability along with constant change. Many of the Linux kernel building blocks that support these demanding workloads are decades old. Luckily, eBPF allows us to dynamically change the kernel to create abstractions that are ready for the cloud native world. eBPF is unlocking cloud native innovation, creating new kernel building blocks, and dramatically improving the performance of application platforms.

Bill Mulligan is a Cilium maintainer and heavily involved in the eBPF ecosystem. He works at Isovalent.

—

New Tech Forum provides a venue to explore and discuss emerging enterprise technology in unprecedented depth and breadth. The selection is subjective, based on our pick of the technologies we believe to be important and of greatest interest to InfoWorld readers. InfoWorld does not accept marketing collateral for publication and reserves the right to edit all contributed content. Send all inquiries to newtechforum@infoworld.com.
